Subderivative

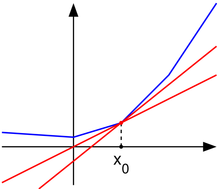

A convex function (blue) and "subtangent lines" at x 0 {\displaystyle x_{0}} (red).

In mathematics, the subderivative (or subgradient) generalizes the derivative to convex functions which are not necessarily differentiable. The set of subderivatives at a point is called the subdifferential at that point. Subderivatives arise in convex analysis, the study of convex functions, often in connection with convex optimization.

Let f : I → R {\displaystyle f:I\to \mathbb {R} } be a real-valued convex function defined on an open interval of the real line. Such a function need not be differentiable at all points: For example, the absolute value function f ( x ) = | x | {\displaystyle f(x)=|x|} is non-differentiable when x = 0 {\displaystyle x=0} . However, as seen in the graph on the right (where f ( x ) {\displaystyle f(x)} in blue has non-differentiable kinks similar to the absolute value function), for any x 0 {\displaystyle x_{0}} in the domain of the function one can draw a line which goes through the point ( x 0 , f ( x 0 ) ) {\displaystyle (x_{0},f(x_{0}))} and which is everywhere either touching or below the graph of f. The slope of such a line is called a subderivative.

Definition

Rigorously, a subderivative of a convex function f : I → R {\displaystyle f:I\to \mathbb {R} } at a point x 0 {\displaystyle x_{0}} in the open interval I {\displaystyle I} is a real number c {\displaystyle c} such that

f ( x ) − f ( x 0 ) ≥ c ( x − x 0 ) {\displaystyle f(x)-f(x_{0})\geq c(x-x_{0})}

for all x ∈ I {\displaystyle x\in I} . By the converse of the mean value theorem, the set of subderivatives at x 0 {\displaystyle x_{0}} for a convex function is a nonempty closed interval [ a , b ] {\displaystyle [a,b]} , where a {\displaystyle a} and b {\displaystyle b} are the one-sided limits

a = lim x → x 0 − f ( x ) − f ( x 0 ) x − x 0 , {\displaystyle a=\lim _{x\to x_{0}^{-}}{\frac {f(x)-f(x_{0})}{x-x_{0}}},}

b = lim x → x 0 + f ( x ) − f ( x 0 ) x − x 0 . {\displaystyle b=\lim _{x\to x_{0}^{+}}{\frac {f(x)-f(x_{0})}{x-x_{0}}}.}

The interval [ a , b ] {\displaystyle [a,b]} of all subderivatives is called the subdifferential of the function f {\displaystyle f} at x 0 {\displaystyle x_{0}} , denoted by ∂ f ( x 0 ) {\displaystyle \partial f(x_{0})} . If f {\displaystyle f} is convex, then its subdifferential at any point is non-empty. Moreover, if its subdifferential at x 0 {\displaystyle x_{0}} contains exactly one subderivative, then f {\displaystyle f} is differentiable at x 0 {\displaystyle x_{0}} and ∂ f ( x 0 ) = { f ′ ( x 0 ) } {\displaystyle \partial f(x_{0})=\{f'(x_{0})\}} .

Example

Consider the function f ( x ) = | x | {\displaystyle f(x)=|x|} which is convex. Then, the subdifferential at the origin is the interval [ − 1 , 1 ] {\displaystyle [-1,1]} . The subdifferential at any point x 0 < 0 {\displaystyle x_{0}<0} is the singleton set { − 1 } {\displaystyle \{-1\}} , while the subdifferential at any point x 0 > 0 {\displaystyle x_{0}>0} is the singleton set { 1 } {\displaystyle \{1\}} . This is similar to the sign function, but is not single-valued at 0 {\displaystyle 0} , instead including all possible subderivatives.
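The example can be checked numerically. The following Python sketch is an illustration only: the helper names `one_sided_slopes` and `is_subderivative`, the step size `h`, and the sample grid are assumptions made here, not part of any library. It approximates the one-sided limits a and b by difference quotients and tests the defining inequality on finitely many points, which can refute a candidate slope but does not prove that it is a subderivative.

```python
def one_sided_slopes(f, x0, h=1e-6):
    """Approximate the left and right derivatives of f at x0 (the limits a and b)."""
    left = (f(x0) - f(x0 - h)) / h    # difference quotient from the left
    right = (f(x0 + h) - f(x0)) / h   # difference quotient from the right
    return left, right


def is_subderivative(f, x0, c, samples, tol=1e-12):
    """Check f(x) - f(x0) >= c * (x - x0) at every sample point x."""
    return all(f(x) - f(x0) >= c * (x - x0) - tol for x in samples)


# Hypothetical sample grid on [-5, 5]; a finite check, not a proof.
samples = [k / 10 for k in range(-50, 51)]

a, b = one_sided_slopes(abs, 0.0)
print("approximate subdifferential of |x| at 0:", (a, b))
print("0.5 is a subderivative at 0:", is_subderivative(abs, 0.0, 0.5, samples))
print("1.5 is a subderivative at 0:", is_subderivative(abs, 0.0, 1.5, samples))
```

Away from the origin the two difference quotients agree up to rounding, which matches the singleton subdifferentials stated above.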

Properties

- A convex function f : I → R {\displaystyle f:I\to \mathbb {R} } is differentiable at x 0 {\displaystyle x_{0}} if and only if the subdifferential is a singleton set, which is { f ′ ( x 0 ) } {\displaystyle \{f'(x_{0})\}} .

- A point x 0 {\displaystyle x_{0}} is a global minimum of a convex function f {\displaystyle f} if and only if zero is contained in the subdifferential. For instance, in the figure above, one may draw a horizontal "subtangent line" to the graph of f {\displaystyle f} at ( x 0 , f ( x 0 ) ) {\displaystyle (x_{0},f(x_{0}))} . This last property is a generalization of the fact that the derivative of a function differentiable at a local minimum is zero.

- If f {\displaystyle f} and g {\displaystyle g} are convex functions with subdifferentials ∂ f ( x ) {\displaystyle \partial f(x)} and ∂ g ( x ) {\displaystyle \partial g(x)} , and x {\displaystyle x} is an interior point of the domain of one of the functions, then the subdifferential of f + g {\displaystyle f+g} is ∂ ( f + g ) ( x ) = ∂ f ( x ) + ∂ g ( x ) {\displaystyle \partial (f+g)(x)=\partial f(x)+\partial g(x)} (where the addition operator denotes the Minkowski sum). This reads as "the subdifferential of a sum is the sum of the subdifferentials."
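The minimum condition and the sum rule can be combined in a small worked example. The Python sketch below is illustrative: the function h(x) = |x| + |x − 1| and all helper names are choices made here, and intervals are represented as `(lo, hi)` tuples. It forms the subdifferential of h as a Minkowski sum of intervals and tests whether zero belongs to it.

```python
def subdiff_abs_shifted(t, x):
    """Subdifferential of x -> |x - t| at the point x, as a closed interval (lo, hi)."""
    if x < t:
        return (-1.0, -1.0)
    if x > t:
        return (1.0, 1.0)
    return (-1.0, 1.0)  # at the kink every slope in [-1, 1] is a subderivative


def minkowski_sum(i1, i2):
    """Minkowski sum of closed intervals: [a, b] + [c, d] = [a + c, b + d]."""
    return (i1[0] + i2[0], i1[1] + i2[1])


def subdiff_sum(x):
    """Subdifferential of h(x) = |x| + |x - 1| obtained from the sum rule."""
    return minkowski_sum(subdiff_abs_shifted(0.0, x), subdiff_abs_shifted(1.0, x))


def is_global_min(x):
    """x minimizes h exactly when 0 lies in the subdifferential of h at x."""
    lo, hi = subdiff_sum(x)
    return lo <= 0.0 <= hi


for x in (-1.0, 0.0, 0.5, 1.0, 2.0):
    print(x, subdiff_sum(x), is_global_min(x))
```

Under these assumptions the test succeeds exactly for the sampled points in [0, 1], the interval on which h attains its minimum value 1.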

The subgradient

The concepts of subderivative and subdifferential can be generalized to functions of several variables. If f : U → R {\displaystyle f:U\to \mathbb {R} } is a real-valued convex function defined on a convex open set in the Euclidean space R n {\displaystyle \mathbb {R} ^{n}} , a vector v {\displaystyle v} in that space is called a subgradient at x 0 ∈ U {\displaystyle x_{0}\in U} if for any x ∈ U {\displaystyle x\in U} one has that

f ( x ) − f ( x 0 ) ≥ v ⋅ ( x − x 0 ) , {\displaystyle f(x)-f(x_{0})\geq v\cdot (x-x_{0}),}

where the dot denotes the dot product. The set of all subgradients at x 0 {\displaystyle x_{0}} is called the subdifferential at x 0 {\displaystyle x_{0}} and is denoted ∂ f ( x 0 ) {\displaystyle \partial f(x_{0})} . The subdifferential is always a nonempty convex compact set.
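As an illustration of the several-variable definition, the Python sketch below tests the subgradient inequality for the ℓ1 norm on R^3. The choice of function, the helper names, and the random test points are assumptions made for this example. At coordinates where x 0 is zero, any value in [−1, 1] may be used for the corresponding entry of v, which is what the hypothetical `free` parameter selects. As in one dimension, a finite set of points can only refute a candidate vector.

```python
import random


def l1_norm(x):
    """The convex function f(x) = |x_1| + ... + |x_n|."""
    return sum(abs(xi) for xi in x)


def dot(u, v):
    """Dot product of two vectors given as lists."""
    return sum(ui * vi for ui, vi in zip(u, v))


def l1_subgradient(x0, free=0.0):
    """One subgradient of the l1 norm at x0: sign(x0_i) where x0_i != 0,
    and the value `free` (any number in [-1, 1]) where x0_i == 0."""
    if not -1.0 <= free <= 1.0:
        raise ValueError("free must lie in [-1, 1]")
    return [(1.0 if xi > 0 else -1.0) if xi != 0 else free for xi in x0]


def satisfies_subgradient_inequality(f, x0, v, points, tol=1e-9):
    """Check f(x) - f(x0) >= v . (x - x0) at every test point x."""
    fx0 = f(x0)
    return all(
        f(x) - fx0 >= dot(v, [xi - x0i for xi, x0i in zip(x, x0)]) - tol
        for x in points
    )


rng = random.Random(0)  # fixed seed so the sketch is reproducible
points = [[rng.uniform(-5, 5) for _ in range(3)] for _ in range(1000)]
points.append([1.0, 1.0, -2.0])  # a point that exposes an invalid candidate

x0 = [1.0, 0.0, -2.0]
v_good = l1_subgradient(x0, free=0.3)   # [1.0, 0.3, -1.0]
v_bad = [1.0, 1.5, -1.0]                # second entry lies outside [-1, 1]

print(satisfies_subgradient_inequality(l1_norm, x0, v_good, points))
print(satisfies_subgradient_inequality(l1_norm, x0, v_bad, points))
```

The first check passes for every test point, while the second fails at the appended point, where f(x) − f(x 0) = 1 but v ⋅ (x − x 0) = 1.5.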

These concepts generalize further to convex functions f : U → R {\displaystyle f:U\to \mathbb {R} } on a convex set in a locally convex space V {\displaystyle V} . A functional v ∗ {\displaystyle v^{*}} in the dual space V ∗ {\displaystyle V^{*}} is called a subgradient at x 0 {\displaystyle x_{0}} in U {\displaystyle U} if for all x ∈ U {\displaystyle x\in U} ,

f ( x ) − f ( x 0 ) ≥ v ∗ ( x − x 0 ) . {\displaystyle f(x)-f(x_{0})\geq v^{*}(x-x_{0}).}

The set of all subgradients at x 0 {\displaystyle x_{0}} is called the subdifferential at x 0 {\displaystyle x_{0}} and is again denoted ∂ f ( x 0 ) {\displaystyle \partial f(x_{0})} . The subdifferential is always a convex closed set. It can be an empty set; consider for example an unbounded operator, which is convex, but has no subgradient. If f {\displaystyle f} is continuous, the subdifferential is nonempty.

History

The subdifferential on convex functions was introduced by Jean Jacques Moreau and R. Tyrrell Rockafellar in the early 1960s. The generalized subdifferential for nonconvex functions was introduced by F.H. Clarke and R.T. Rockafellar in the early 1980s.

References

- Bubeck, S. (2014). Theory of Convex Optimization for Machine Learning. ArXiv, abs/1405.4980.

- Rockafellar, R. T. (1970). Convex Analysis. Princeton University Press. p. 242. ISBN 0-691-08069-0.

- Lemaréchal, Claude; Hiriart-Urruty, Jean-Baptiste (2001). Fundamentals of Convex Analysis. Springer-Verlag Berlin Heidelberg. p. 183. ISBN 978-3-642-56468-0.

- Clarke, Frank H. (1983). Optimization and nonsmooth analysis. New York: John Wiley & Sons. pp. xiii+308. ISBN 0-471-87504-X. MR 0709590.

- Borwein, Jonathan; Lewis, Adrian S. (2010). Convex Analysis and Nonlinear Optimization : Theory and Examples (2nd ed.). New York: Springer. ISBN 978-0-387-31256-9.

- Hiriart-Urruty, Jean-Baptiste; Lemaréchal, Claude (2001). Fundamentals of Convex Analysis. Springer. ISBN 3-540-42205-6.

- Zălinescu, C. (2002). Convex analysis in general vector spaces. World Scientific Publishing Co., Inc. pp. xx+367. ISBN 981-238-067-1. MR 1921556.

External links

- "Uses of lim h → 0 f ( x + h ) − f ( x − h ) 2 h {\displaystyle \lim \limits _{h\to 0}{\frac {f(x+h)-f(x-h)}{2h}}} ". Stack Exchange. September 18, 2011.
